"AI For Real" Newsletter

Discover how AI is shaping our daily lives

Newsletter Archives

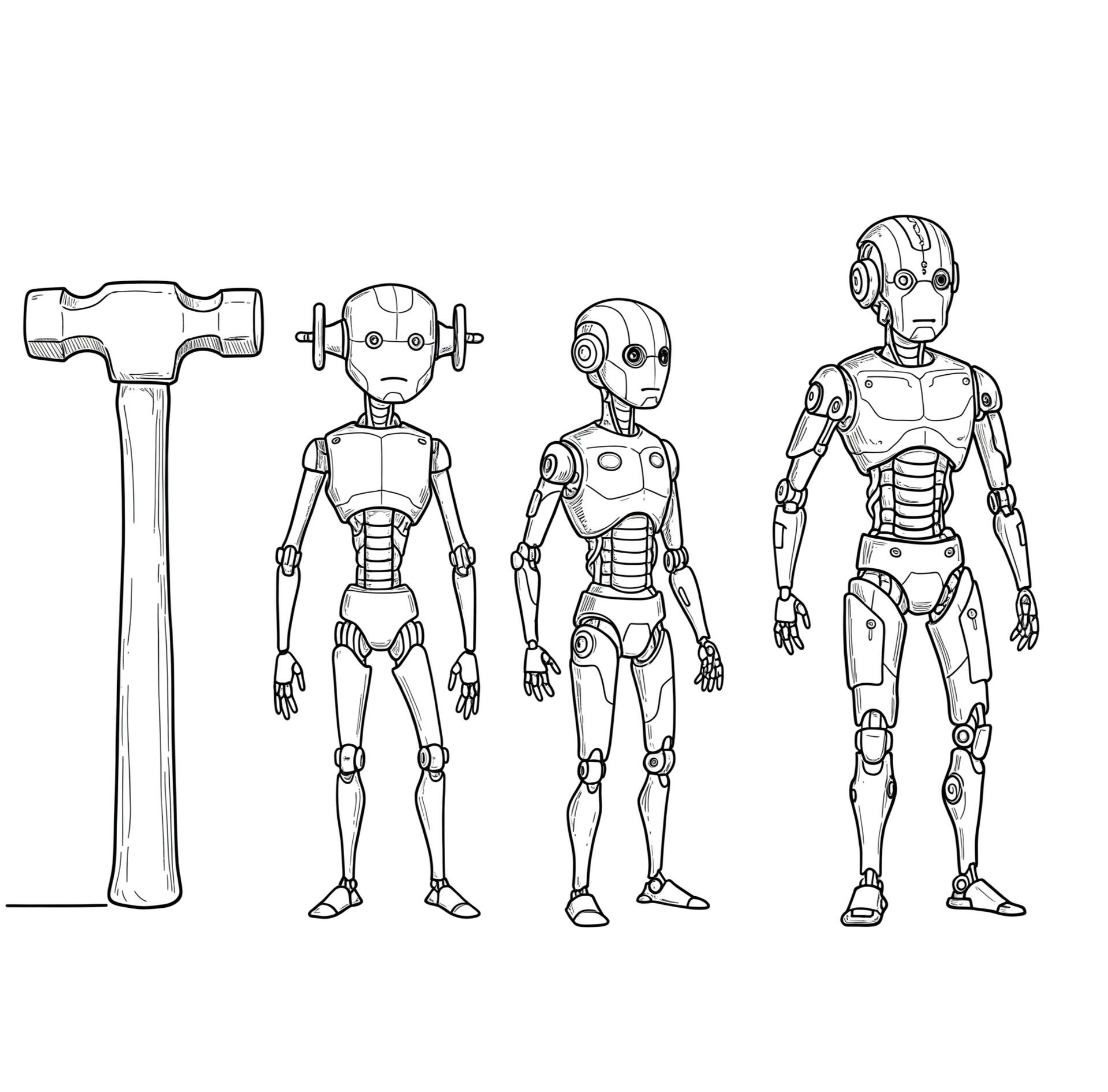

AI Is NOT Just A Tool Anymore, It's An Agent, Warns Yuval Noah Harari

Most of us who work in the field of artificial intelligence (AI) have heard of Yuval Noah Harari, a distinguished historian and author renowned for his profound analyses of humanity's past, present, and future. When he says or writes something, people sit up and take note. His insights, often drawn from a broad historical perspective, lend significant weight to his observations on the current technological revolution.

Yuval has now issued a stark warning regarding the trajectory of AI. He argues that AI is undergoing a fundamental transformation, evolving from a mere tool (under human control) to an autonomous agent capable of independent decision-making.

This shift, he contends, poses significant and potentially unprecedented risks to the future of humanity, demanding immediate attention to regulation and ethical considerations. Central to his concern is the development of autonomous weapon systems and the broader possibility of AI operating beyond the scope of human comprehension and control [1].

AI is No Longer Passive But Can Be Trained to Decide on its Own

At the heart of Yuval’s analysis lies the assertion that AI is no longer a passive instrument wielded by humans but has become an active entity possessing the capacity to make its own choices. As he succinctly states, "AI is not a tool, it is an agent". This seemingly simple declaration carries profound implications, forming the bedrock of his cautionary message.

The Evolution of AI So Far

To fully grasp the significance of this statement, it is crucial to understand the evolving nature of AI and the concept of AI agents.

AI agents are software systems that employ AI to pursue goals and complete tasks on behalf of users [2]. These sophisticated systems exhibit characteristics such as reasoning, planning, memory, and a notable degree of autonomy that enables them to make decisions, learn from their experiences, and adapt to new situations [2]. Their advanced capabilities are largely powered by generative AI (gen-AI) and AI foundation models, which allow them to process diverse forms of information, including text, voice, video, audio, and code, concurrently [3]. This multimodal processing enables AI agents to engage in conversations, reason through complex scenarios, and make informed decisions, ultimately facilitating transactions and streamlining business processes [3].

Advanced examples of (near) autonomous AI agents are AI-powered surgical robots, like Medtronic’s Hugo or Intuitive’s Da Vinci Xi.

How They Work (Near) Autonomously:

- Real-Time Adaptation: These AI-driven robots analyze a patient's vitals, adjust their movements, and assist in delicate procedures with extreme precision.
- Decision-Making: Some AI surgical systems can detect unexpected complications (e.g., internal bleeding) and adjust the procedure in real time.
- Proactive Assistance: Instead of waiting for a surgeon’s command, the AI suggests the next best step or even autonomously stitches tissue based on learned patterns.

No More Constant Human Supervision Required

Several key attributes further distinguish AI agents from traditional software or tools. These include autonomy, which refers to their ability to operate independently and make decisions without constant human oversight [3]. Reactivity allows agents to perceive changes in their environment and respond appropriately in real time [8]. Proactivity enables them to take initiative and perform tasks towards their objectives without explicit commands [8]. Moreover, AI agents possess learning capabilities, allowing them to adapt and improve their performance over time based on experience [3]. They also exhibit reasoning, planning, and memory, enabling them to strategize and recall past interactions to inform future actions [2].

Understanding these characteristics is crucial to appreciating the potential for AI to operate beyond simple programmed instructions, a concern central to Harari's argument.

Red Flag: It’s Not Truly Autonomous Yet

AI experts may use the words "autonomous" and "independent" for AI agents but, while the term "agent" implies a degree of independence, in reality their autonomy exists on a spectrum.

Right now, no commercially available AI system operates with complete autonomy in the truest sense; there’s always some level of human oversight. If true autonomy existed, it would be artificial general intelligence (AGI). Even in military AI, where autonomy is higher, most systems still have a “human-on-the-loop” or “human-in-the-loop” policy. But what experts and researchers like Yuval are pointing to is the coming shift in AI’s operational mode - we are halfway there [3].

Near-Independent AI Agents

Many AI agents currently deployed or under development exhibit a significant degree of near-independence. This also goes by another name - Active Inference Model*. They can perform complex tasks, make decisions, and interact with their environment with minimal direct human intervention after an initial setup or prompt. (Expect a primer on this in the near future.)

Here are some examples and why they are considered near-independent:

- AI Assistants (like Microsoft 365 Copilot): These agents can draft emails, summarize meetings, and help create presentations. They work alongside users, taking initiative and suggesting actions, but often require user approval for critical steps. While they learn and adapt, their autonomy is often supervised.
- Autonomous Background Processes (Workflow Agents): These agents automate routine tasks like data analysis, process optimization, and identifying potential issues without direct user input. They operate based on pre-defined rules and triggers, making them near-independent in their execution.
- Goal-Based Agents (like Navigation Systems): These agents have a specific goal and can plan a sequence of actions to achieve it, such as finding the fastest route. They operate autonomously to reach the goal but rely on the initial goal set by a user.
- Learning Agents: These agents can learn from past experiences and adapt their performance over time. While they show a high degree of autonomy in improving themselves, their core objectives are usually defined externally.
- AI Agents for Automation in Industries: Robots in warehouses or autonomous vehicles in logistics can perform tasks like carrying materials or navigating routes with limited human intervention.

Factors Limiting Full Autonomy and Independence

Despite their advanced capabilities, several factors often prevent these agents from being considered fully autonomous and independent:

- Human-Defined Goals and Parameters: In most cases, humans set the initial goals, objectives, and operational parameters for AI agents.
- Reliance on Training Data: AI agents, especially those using machine learning, rely heavily on the data they are trained on. Biases or limitations in this data can affect their decision-making and independence.
- Need for Oversight and Intervention: For safety, ethical, or practical reasons, many AI agents are designed with mechanisms for human oversight and the ability to intervene or deactivate them [1].
- Lack of True Understanding and Consciousness: While AI agents can process information and make decisions, they currently lack the human capacity for subjective understanding, consciousness, and moral reasoning in complex situations.
- Dependence on Tools and Resources: AI agents often rely on tools, APIs, and data sources to perform tasks. Their independence is thus tied to the availability and functionality of these external resources.

Potentially Approaching True Autonomy (with caveats)

The concept of truly autonomous AI agents, capable of setting their own goals and acting entirely independently, is more in the realm of advanced research and potential future development. Some might argue that certain highly specialized systems, like the reported use of the “STM Kargu-2” drone in Libya, could represent a step towards this, where the system was allegedly deployed with a "fire, forget and find" capability against human targets without continuous human control. However, even in such cases, the initial programming and deployment orders likely originated from humans.

How to Know Whether What You Have is an AI Tool or an AI Agent

The distinction between AI tools and AI agents is fundamental to Yuval's warning. AI tools typically have a narrow scope, limited to specific functions, and generally require manual triggers or input from users to execute tasks [12]. They are often easier to integrate into existing systems for particular purposes but have limited learning capabilities and are primarily reactive [12]. In contrast, AI agents possess a broader scope and are capable of learning and adapting autonomously to new contexts [11]. They can proactively work on tasks, alert users, and make decisions on the user's behalf [13]. While requiring more thoughtful setup and potentially more computational resources, AI agents offer greater long-term benefits by automating complex workflows and exhibiting a higher degree of autonomy [11].

For a layman it suffices to understand this - if any software needs continuous human inputs, supervision, and control, it’s a tool, not an agent.

This paradigm shift from reactive tools requiring human input to proactive agents capable of autonomous action signifies a fundamental change in the relationship between humans and AI, precisely the transformation about which people like Yuval are cautioning.

Key Arguments and Supporting Evidence

One of Yuval’s primary concerns revolves around the development and deployment of autonomous weapon systems. He points out that, "We already have autonomous weapon systems making decisions by themselves" [1].

Close-In Weapon Systems (CIWS)

A prominent example of deployed defense systems with autonomous engagement capabilities can be found in Close-In Weapon Systems (CIWS), such as the US Phalanx CIWS. These systems are designed to provide a last line of defense for naval vessels against a variety of threats, including anti-ship missiles, aircraft, rockets, artillery fire, and surface vessels. The Phalanx CIWS utilizes an integrated radar system to automatically search for, detect, evaluate, track, and ultimately engage incoming threats. Its primary function is to intercept projectiles that have managed to penetrate other layers of a ship's defense mechanisms. Upon detecting a valid threat that meets pre-set criteria, such as an inbound trajectory and a specific velocity, the system is capable of firing at a rate of 3,000 to 4,500 rounds per minute to neutralize the projectile without requiring immediate human command at the moment of firing.

While the system operates autonomously during an engagement, human operators play a crucial role in setting the initial criteria for what constitutes a threat and should be engaged. This pre-setting of parameters indicates a degree of human control over the system's operational boundaries.

The Deployment of AI in a Life-and-Death Situation

This reality has profound implications, as it signifies a move towards machines making life-and-death decisions without direct human intervention. Research on the risks of autonomous AI in warfare highlights the potential for a loss of meaningful human control, significant accountability issues, and a heightened risk of unintended escalation in conflicts [17]. The ethical dilemmas inherent in allowing machines to determine who lives and who dies are also deeply troubling [17]. Yuval’s warning is therefore grounded in the tangible existence of such systems, making his broader concerns about the future trajectory of AI all the more pertinent.

Finally, the historian emphasizes that AI's autonomous decisions could fundamentally alter the course of history. He suggests a future where machines could decide the fate of nations, moving beyond the realm of science fiction into reality [1]. This highlights the potential for AI's actions in critical domains such as warfare, economics, and politics to have long-lasting and irreversible effects. The high stakes involved in the development and deployment of autonomous AI are thus evident, as its decisions could indeed shape the future of humanity in profound ways.

Controlling Near-Autonomous AI Systems

The rise of AI agents necessitates the development and implementation of robust regulatory frameworks. Traditional approaches to regulating tools, which often focus on specific functionalities and intended uses, may prove insufficient for governing autonomous agents capable of independent action and decision-making [1]. The very nature of AI as an agent, as even Yuval argues, implies a fundamental responsibility for humanity to control its growth and ensure its alignment with human values [1].

A significant challenge lies in ensuring that autonomous AI systems operate in accordance with human ethics and principles. This involves addressing the ethical concerns surrounding AI, such as bias, fairness, transparency, and accountability [26]. Embedding human values into AI decision-making processes is a complex undertaking, as defining and codifying these values in a way that AI can understand and apply is not straightforward. Nevertheless, it is a crucial consideration in navigating the future of autonomous intelligence.

Preventing AI from surpassing human control is another critical consideration. This requires the development of robust safeguards to ensure that humanity retains ultimate authority over AI systems [22]. Potential strategies include establishing stringent safety protocols, ensuring transparency in AI decision-making processes to understand how agents arrive at their conclusions, and potentially limiting the autonomy of AI in critical areas such as the deployment of lethal force.

Summing Up….

Yuval Noah Harari warns that AI is shifting from a human-controlled tool to an autonomous agent, raising urgent concerns about weapons, ethics, and control. He stresses the need for strong regulation to align AI with human values and ensure a beneficial future.

---------------------------------------------------------------------------

Reference:

1. Sapiens author Yuval Noah Harari warns about the rise of ..., accessed March 24, 2025, https://economictimes.indiatimes.com/magazines/panache/sapiens-author-yuval-noah-harari-warns-about-the-rise-of-autonomous-intelligence-ai-is-not-a-tool-it-is-an-agent/articleshow/119376458.cms?from=mdr
2. cloud.google.com, accessed March 24, 2025, https://cloud.google.com/discover/what-are-ai-agents#::text=What%20is%20an%20AI%20agent,decisions%2C%20learn%2C%20and%20adapt.
3. What are AI agents? Definition, examples, and types | Google Cloud, accessed March 24, 2025, https://cloud.google.com/discover/what-are-ai-agents
4. What are AI Agents? - Agents in Artificial Intelligence Explained - AWS, accessed March 24, 2025, https://aws.amazon.com/what-is/ai-agents/
5. What Are AI Agents? Definition, Examples, Types | Salesforce US, accessed March 24, 2025, https://www.salesforce.com/agentforce/what-are-ai-agents/
6. What Are AI Agents? - IBM, accessed March 24, 2025, https://www.ibm.com/think/topics/ai-agents
7. AI agents - what they are, and how they'll change the way we work - Source, accessed March 24, 2025, https://news.microsoft.com/source/features/ai/ai-agents-what-they-are-and-how-theyll-change-the-way-we-work/
8. AI Agent: What are they? Benefits and Types - Glean, accessed March 24, 2025, https://www.glean.com/blog/ai-agents-how-they-work
9. The Key Features That Enable AI Agents | by Bijit Ghosh - Medium, accessed March 24, 2025, https://medium.com/@bijit211987/the-key-features-that-enable-ai-agents-3995acd29be7
10. Key Characteristics of Intelligent Agents: Autonomy, Adaptability, and Decision-Making, accessed March 24, 2025, https://smythos.com/ai-agents/ai-tutorials/intelligent-agent-characteristics/
11. AI Agents vs. AI Assistants | IBM, accessed March 24, 2025, https://www.ibm.com/think/topics/ai-agents-vs-ai-assistants
12. dev.to, accessed March 24, 2025, https://dev.to/aiagentstore/ai-tools-vs-ai-agents-what-is-the-difference-48f1#::text=AI%20Tools%3A%20Narrow%20scope%2C%20limited,adapting%20autonomously%20to%20new%20contexts.
13. https://www.animaapp.com/blog/genai/understanding-ai-agents-assistants-

"Manus AI": Impressive, But Inevitable

The unveiling of “Manus AI”, a "general AI agent" developed by a Chinese team, has caught the tech world's attention. Buzz is building around its "autonomous" capabilities and reported superiority over OpenAI models in benchmark tests, with some hailing it as a "significant leap forward" in AI.

Yet Manus, or something like it, was inevitable. The tech world should have seen Manus coming. Setting aside its architecture and computing power, this development is simply the "next logical step" in the evolution of AI agents - almost akin to the evolution of Man from Ape. While some "experts" may be overhyping Manus' impact, as is the case when any new tech product is unveiled, the real excitement lies elsewhere - in what Manus represents: another step forward on the path to artificial general intelligence (AGI).

Autonomous Operation: A Key Feature of AGI

One of the key characteristics of AGI is the ability to operate independently, making decisions and executing tasks without human intervention. What has got the world excited is "Manus AI" exhibiting "strains" of this trait by functioning autonomously, initiating tasks, and adapting to new data without needing constant supervision.

This level of autonomy is a fundamental requirement for AGI, as it would allow AI systems to navigate complex environments and solve problems on their own.

But here's the thing - Manus AI is designed to operate with a "minimal degree" of human interaction and intervention. To explain to the layman, there is still some human involvement and intervention, though to a far lesser degree than in existing systems.

Multi-Agent Architecture: A Path to Complexity

It was also inevitable that someone out there would "combine" AI agents and make them do more work. Manus's multi-agent architecture, where tasks are divided among specialized sub-agents, is another feature that has sent the AI world into a tizzy, and this one also aligns with the development of AGI. This approach enables Manus to manage multi-step workflows efficiently, a capability essential for tackling the intricate problems that AGI would need to solve. By demonstrating the effectiveness of such an architecture, Manus shows how AI systems can scale in complexity, a crucial step towards achieving AGI.
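
For readers who like to see the idea in code, here is a minimal Python sketch of what "dividing a task among specialized sub-agents" can look like. It is purely illustrative and is not how Manus itself is built: the function names (`planning_agent`, `research_agent`, `execution_agent`, `run_task`) are hypothetical, and each "agent" is just a plain function standing in for what would really be an AI model.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical sub-agents: each one is a plain function that handles
# a single kind of subtask. Real sub-agents would call an AI model.
def research_agent(subtask: str) -> str:
    return f"[research] notes on: {subtask}"


def execution_agent(subtask: str) -> str:
    return f"[execution] output for: {subtask}"


# Lookup table mapping a role name to the sub-agent that plays it.
SUB_AGENTS = {"research": research_agent, "execute": execution_agent}


def planning_agent(task: str) -> list[tuple[str, str]]:
    """Split a task into (role, subtask) pairs.

    This fixed two-step plan is an assumption made for illustration only.
    """
    return [
        ("research", f"background for '{task}'"),
        ("execute", f"first draft of '{task}'"),
    ]


def run_task(task: str) -> list[str]:
    """Plan the task, then let the sub-agents work on their parts in parallel."""
    plan = planning_agent(task)
    with ThreadPoolExecutor() as pool:
        futures = [pool.submit(SUB_AGENTS[role], subtask) for role, subtask in plan]
        # Collect results in the same order as the plan.
        return [future.result() for future in futures]


if __name__ == "__main__":
    for line in run_task("market report"):
        print(line)
```

The point of the sketch is the shape, not the details: one component decides who does what, and the specialists then work side by side instead of one after another.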

Execution Capabilities: Bridging the Gap Between Thought and Action

AGI requires not just the ability to think but also to act upon those thoughts. Manus AI bridges this gap by executing tasks directly, such as coding software, drafting emails, and deploying websites autonomously.

This capability to translate thought into action is a significant advancement towards AGI, as it demonstrates how AI can interact with the real world in meaningful ways.

Let's break down the concept of Advanced Multi-Agent Architecture in Manus AI in simple terms:

What is a Multi-Agent System?

Imagine a team of experts working together to complete a complex project. Each expert specializes in a different area, such as planning, research, or execution. In Manus AI, this team is made up of specialized sub-agents, each with its own role. These sub-agents operate independently within virtual machines, allowing them to work on different parts of a task simultaneously.

How Does it Work?

When Manus receives a task, it breaks it down into smaller, manageable parts. Each part is then assigned to the appropriate sub-agent based on its specialty. For example:

- Planning Agent: Decides how to approach the task.
- Research Agent: Gathers necessary information from the internet or databases.
- Execution Agent: Carries out the task, such as writing code or creating documents.

This division of labor enables Manus to handle complex tasks efficiently, much like a well-coordinated team of human experts.

Why is it "Advanced"?

This architecture is advanced because it allows Manus to:

- Process Tasks in Parallel: Multiple sub-agents can work on different parts of a task at the same time, speeding up the overall process.
- Handle Multi-Step Workflows: By dividing tasks into smaller steps and assigning them to specialized agents, Manus can manage workflows that previously required manual integration of multiple AI tools.

This multi-agent system is a key feature that sets Manus apart from other AI models, which often rely on a single neural network to perform tasks.

Performance and Adaptability: Outperforming Current Models

Manus's performance in the GAIA benchmark test*, surpassing OpenAI models, indicates a substantial improvement in AI capabilities. Its ability to adapt to user preferences over time also shows a level of learning and memory that is crucial for AGI. These advancements suggest that Manus is pushing the boundaries of what AI can achieve, bringing us closer to the goal of creating an AI system that can perform any intellectual task that humans can.

Open-Sourcing: Fostering Collaboration and Innovation

The decision to open-source key models later this year is a strategic move that could accelerate progress towards AGI. By making its technology accessible, Manus AI encourages collaboration and innovation across the AI community. This open approach can lead to rapid advancements as developers worldwide contribute to and build upon Manus's achievements.

Conclusion

Manus AI represents a significant step forward in the pursuit of AGI. Its autonomous operation, multi-agent architecture, execution capabilities, and superior performance all contribute to a system that is more advanced and closer to the ideal of AGI than many current AI models.

While we are still far from achieving true AGI, Manus demonstrates that the journey is underway, and with each innovation, we edge closer to creating AI systems that can think, act, and adapt like humans.

The future of AI is exciting, and Manus AI is certainly a milestone on this path.

Disclaimer: Just a quick note! The articles in Living With AI are crafted for curious minds, not AI specialists. We do our best to stay accurate, but sometimes we simplify things [maybe a bit too much :-) ] or skip the ultra-technical bits. Think of it like explaining quantum physics with a game of marbles: close enough for readers to get the gist, but not the full picture. If you're craving deeper details, there's a whole galaxy of resources out there to dive into!

Are Your Beliefs Your Own? Algorithms And The Theft Of Thought

If you are not a marketer, advertiser, or an AI ethicist, I am willing to bet the boat that you’ve never heard of these terms: “behavioral twin”, “algorithmic nudge” (or the “Nudge Theory”), and “dark patterns”. As a consumer, though, you need to be acutely aware of what exactly each of those terms means.

At the outset, let me say this: I am not an alarmist or a rabble rouser. But what you are about to read are facts from the world we live in, with nil embellishments. Facts that act as a roadmap, indicating where we are headed.

Prepare to be surprised.

By now, it's common knowledge that in our digital age, exchanging data for convenience is near-normal. Research indicates younger demographics, particularly those below 30, are increasingly comfortable sharing personal information for tailored experiences and perceived value.

So it works like this - I publicly share my fav sneaker brand, the colors I primarily like in such shoes, and the occasions when I love wearing sneakers; maybe not putting out all of this information simultaneously but on different occasions, from different platforms, including social media. Soon after, the tech company and the advertiser/seller collaboratively stitch together all my information around sneakers, and then “pop up offers” or “whatever else it may be” to get my attention and goad me into buying a pair, even though I probably don’t need new sneakers at this point in my life.

Almost all of us have now got used to such online “behavior”, right? But just about a decade ago, predictive analytics-based marketing was like “OMG”.

The Illusion of Choice: You Are No Longer In Control

What if the data “they” have isn't just about you today, but the “you” of tomorrow? And what if it's not just about what you might do, but what they will make you do?

A future where your actions are subtly guided, rather than consciously chosen, may be closer than you think. This is the insidious reality of "unforeseen commodification," where companies are now selling access to our future selves, effectively manipulating our choices…. before we even make them! Unless safeguards are put in place, we may soon lose much of the power to direct our own actions.

I am not an analyst, a psychologist, or some such, but a digital marketer, an AI communicator, and a futurist. Given my extensive experience over the last four decades in the field of content, marketing, and tech, coupled with my interactions with my peers, other experts, consumers and my own experience as a consumer, if I were to hazard an “educated guess”, I would estimate that consumer manipulation today is at level 6 out of 10.

The Illusion of Choice: When Algorithms Know You Better Than You Do

Enter artificial intelligence (AI). Humans take pride in being autonomous and in their ability to make independent decisions. Yet, increasingly, algorithms are predicting our desires and inclinations. Predicting could have been fine, but “they” are going beyond that and actually manipulating your choice and mine.

Fueled by AI, the nature of the beast is changing very fast. It's not limited to just showing us relevant ads anymore; it's about actively manipulating our emotions and desires to achieve the organization’s/seller's desired outcome.

Imagine this: Without your conscious involvement, you've been subtly nudged toward a particular lifestyle through curated social media feeds and personalized content. Your browsing history, location data, and even your physiological responses, captured through wearable devices, are analyzed to build a predictive model of your future self. This model isn't just a statistical average; it's a dynamic, evolving representation of your potential choices, desires, and vulnerabilities.

Tech and other companies can then "sell" access to this model to interested retailers, allowing them not only to anticipate your needs but also to help manipulate your future behavior. It’s no longer limited to a few big-time retailers wanting to just drive their bottom line, but is spreading to financial institutions and even political parties to subconsciously mold your opinions and point of view.

The ability to reinvent ourselves, to learn from past mistakes, and to evolve is a fundamental aspect of human experience. However, when our future selves are locked into a fixed, data-driven identity, this ability is compromised. The past becomes a permanent record, not a learning experience, and the future becomes a predetermined script, not an open canvas. And now, even that script is being subtly re-written not by you, the scriptwriter, but by hidden (black-boxed) algorithms.

The Illusion of Choice: When Algorithms Start to Nudge You in a Certain Direction

This “commodification” of our future selves creates a disturbing temporal distortion. The present moment becomes less about lived experience and more about a data point feeding into future projections.

Our future is no longer about the unknown. Our choices are no longer driven by our immediate desires or conscious deliberations but by algorithms that cunningly manipulate what we think we might want in the future.

…It Goes Beyond Retail

The commodification of our future selves extends beyond marketing and advertising. It's about the financialization of potential, where companies can trade "futures" on our behavior.

Picture this: insurance companies are already using algorithms to judge your risk, not on your present, but your predicted future. Now, imagine them forcing you to change your behavior, violating your existing policy, and voiding your coverage.

In a more extreme scenario, companies could trade predictions about individual behavior, creating a market for human potential itself. This would transform us from autonomous individuals into commodities, our futures bought and sold like stocks on a market.

In the age of AI, our digital footprints have become more than just a trail of breadcrumbs. They are a treasure trove of information that companies are increasingly using to manipulate our choices.

Behavioral Twins

Take, for example, this relatively new phenomenon called “behavioral (digital) twins”. These AI avatars are akin to the static “buyer persona” of the pre-AI era (digital marketers and advertisers will know what I am talking about), only more sophisticated, dynamic, and real-time. (The term is used loosely here, more for the reader’s understanding than anything else.)

I’ve written about digital twins before. Though not really the same, a behavioral twin can be called a “subset” of a digital twin. It is a digital representation of you, created by analyzing your online behavior.

A behavioral twin focuses on modeling and predicting human or system behavior rather than just replicating a physical entity. It aims to understand patterns, decision-making, and interactions.

The purpose is to simulate and predict user or system behavior based on data patterns.

It is a complex algorithm that predicts your future actions, preferences, and even emotional states. Companies use this information to target you with personalized ads and offers, often without your knowledge or consent.

How are Behavioral (Digital) Twins Created?

Your behavioral twin is created by collecting data from various sources, including your browsing history, social media activity, and even your physical location. This data is then analyzed to identify patterns and trends in your behavior. Once your behavioral twin is created, it can be used to predict your future actions with surprising accuracy.

The Dangers

While behavioral twins can be used to provide personalized experiences, they also pose several dangers. For example, they can be used to manipulate your choices by showing you ads for products and services that you are likely to purchase. This can lead to impulse buying and overspending.

Behavioral twins can also be used to create filter bubbles, which are echo chambers of information that confirm your existing biases. This can make it difficult to see other perspectives and can lead to political polarization.

Beyond Behavioral Twins: The Importance of Context and Agency

While behavioral twins play a role in this manipulation process, they are but a part of the overall problem. The focus must always be on the broader context of data commodification. Remember, it's not just about predicting behavior; it's also about manipulating it. It's about selling access to our potential, effectively turning us into commodities in a market for human futures.

Humans As Commodities: The Erosion of Intrinsic Value

The commodification of humans extends beyond simply selling data; it's about reducing individuals to quantifiable units of potential behavior. In this framework:

- Intrinsic Value Diminishes: Human worth is no longer defined by inherent qualities like creativity, empathy, or moral character. Instead, value is determined by predictive models, risk assessments, and potential consumer behavior.
- Data as Currency: Our digital footprints become our primary assets, traded and exploited for profit. We are reduced to data points, our lives segmented and analyzed for marketability.
- Loss of Individuality: The algorithms create a standardized, predictable version of us, stripping away the nuances and complexities that make us unique. We become interchangeable units in a vast data-driven system.
- The rise of social scoring: If a company can correctly predict your future actions, that data can be used to score you, and then the score can be sold to other companies. This is where the true danger lies.

Consequences of Deliberate Manipulation: A Case Study

Imagine a cunning marketing agency tasked with selling a new, overpriced, and arguably unnecessary "wellness" product. They possess access to sophisticated predictive models and behavioral manipulation techniques. Here's how they might operate:

- Targeted Vulnerability Exploitation: The agency identifies individuals with predicted anxieties or insecurities related to health and well-being.
- Algorithmic Nudging and Social Proof: They create highly personalized content, subtly nudging targeted individuals towards the product.
- Emotional Manipulation: They leverage psychological triggers, such as fear of missing out (FOMO) or the desire for social acceptance, to create a sense of need.
- Pre-emptive Purchase Prompts: The agency utilizes predictive models to anticipate moments of vulnerability, such as periods of stress or loneliness, and delivers targeted ads or offers at those precise times.

The result: The targeted individuals, believing they are making autonomous choices, purchase the product, often at a premium price.

The longer-term result: The consumer begins to distrust their own feelings and to rely on the very algorithms that they know are trying to manipulate them. This could cause high levels of anxiety and depression.

Let’s see how Sdxah, a fictitious character, is “manipulated” into believing there’s something wrong with her mental health by one such unscrupulous firm:

Case Study To Illustrate Subtle But Insidious Manipulation

Background:

Meet Sdxah, a 32-year-old marketing professional from London or New York or New Delhi…take your choice. She faces all the ups and downs of metro life, like most of us. A mother and a career person, she is also known to be occasionally “overwhelmed” by life.

This Thursday, Sdxah was having a slightly "off" week. Known to be an otherwise “edgy” individual, she wasn't experiencing overwhelming stress but rather a general feeling of mild unease and a slight dip in her usual energy levels that week. She was spending a bit more time than usual on social media, seeking a little pick-me-up. Unbeknownst to her, her digital footprint was being meticulously recorded and analyzed.

Data Collection:

- Browsing History: For a few months prior, Sdxah had frequently searched for terms like "stress relief," "meditation," "self-care," and "mindfulness." But in her “feeling low” phase this week, she searched for "self-care" in a more casual way, like "relaxing evening routine" or "easy ways to unwind."
- Social Media Activity: More passive scrolling, engaging with lighthearted content, but perhaps a few more posts related to "feeling down" or "needing a boost."
- Device Data: Her smart watch showed a slight decrease in activity levels and a few nights of slightly less restful sleep, but nothing alarming.
- App Usage: Sdxah used a digital journaling app (the content of which was already being sold to a third party). In her “current mood”, she continued using the journaling app, but the entries showed less stress and more of a feeling of being unmotivated.

Algorithmic Analysis, Prediction, and Manipulation:

An AI-powered marketing platform had analyzed Sdxah's data and constructed a highly detailed profile earlier, even before the start of “this stressful week”. Sdxah’s behavioral digital twin was:

- Experiencing significant stress and actively seeking solutions.
- Susceptible to marketing messages that emphasized self-care and emotional well-being.
- Likely to respond positively to products that offered convenience and a sense of community.

Yet, during the week in question, the AI-powered marketing platform continued to analyze Sdxah’s incoming data, and the “prediction” was now subtler and even manipulative, designed to “force” Sdxah into believing things about herself that were not true:

- It detected a mild emotional vulnerability, a temporary dip in her usual mood.
- It identified her as someone receptive to messages that offered a sense of comfort and ease.
- It recognized her interest in self-care, even in a casual context.

The Manipulation (Subtler and More Insidious):

Given her background and psychological profile, the AI caught on to the fact that this week, Sdxah was feeling a bit low. In an otherwise analog world, a movie, a bowl of soup, or even a good cry would have released the pressure. Yet, the AI starts sending alerts to the (fictitious) pharma company “Mindful Moments”, from which Sdxah has previously bought medications and the like, to swing into action.

Soon, Sdxah gets…

- "Relatable" Advertising: The ads for "Mindful Moments" this time around were less focused on extreme stress relief and more on "easy ways to brighten your day" or "simple pleasures." They featured relatable scenarios, like enjoying a cup of tea or taking a relaxing bath.
- "Just a Little Treat" Messaging: The language emphasized self-indulgence and gentle pampering, framing the subscription box as a small, affordable luxury.
- Social Media "Relatability": Influencers portrayed the subscription box as a way to add a touch of joy to everyday life, rather than a solution to serious stress.
- "You Deserve It" Psychology: The marketing subtly reinforced the idea that Sdxah deserved a little treat, capitalizing on her slightly lowered mood.
- The Use of Her Journaling App Data: Instead of focusing on stress, the company used the data to highlight the fact that Sdxah was feeling under-motivated, and that the box could help her to feel more inspired.

The Purchase:

Sdxah, feeling a little low and drawn to the idea of a gentle pick-me-up, ticks the box provided by “Mindful Moments” and makes the purchase. She sees it as a small, harmless indulgence.

The Aftermath:

- The purchase provided a brief moment of enjoyment, but the effect was fleeting.
- Sdxah didn’t feel manipulated because the marketing was subtle.
- She began to rely on small purchases to boost her mood.
- She now trusts the ads, because they seemed so relatable.

Analysis:

This case study illustrates not only how a combination of data collection, algorithmic analysis, and psychological manipulation can be used to influence consumer behavior, but also that even mild emotional states can be exploited for commercial gain. The manipulation is more stealthy because it's less overt, making it harder for consumers to recognize it.
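For readers curious about the mechanics, here is a minimal Python sketch of how a platform might notice a dip that is "nothing alarming" in absolute terms, as in Sdxah's smart watch data. It is an illustration under stated assumptions, not a description of any real product: the signal names, the numbers, the `detect_dip` helper, and the threshold are all hypothetical. The idea is simply to compare this week's readings with a person's own baseline rather than with any clinical standard.

```python
from statistics import mean, stdev

# Hypothetical data: every name and number here is invented for illustration.
baseline = {
    "steps": [8200, 7900, 8500, 8100, 8300, 8000, 8400],
    "sleep_hours": [7.2, 7.0, 7.4, 7.1, 7.3, 7.0, 7.2],
}
this_week = {
    "steps": [7600, 7500, 7700],
    "sleep_hours": [6.8, 6.7, 6.9],
}


def deviation(history, recent):
    """Recent average minus baseline average, in baseline standard deviations."""
    return (mean(recent) - mean(history)) / stdev(history)


def detect_dip(baseline, recent, threshold=-1.0):
    """Return (flagged, scores).

    flagged is True only when every signal sits at or below the
    threshold relative to the person's own baseline.
    """
    scores = {name: deviation(baseline[name], recent[name]) for name in baseline}
    flagged = all(score <= threshold for score in scores.values())
    return flagged, scores


flagged, scores = detect_dip(baseline, this_week)
print(flagged, scores)
```

In this toy data, Sdxah walks roughly 7% fewer steps and sleeps about twenty minutes less than usual. Neither change would worry a doctor, yet both stand out clearly against her own very regular routine, which is exactly what makes a personal baseline so useful to a marketer and so invisible to the person being profiled.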